

Yves here. This is a devastating, must-read paper by Servaas Storm on how AI is failing to meet core, repeatedly hyped performance promises, and never can, regardless of how much money and computing power is thrown at it. Yet AI, which Storm calls “Artificial Information”, is still garnering worse-than-dot-com-frenzy valuations even as errors are, if anything, increasing.

By Servaas Storm, Senior Lecturer of Economics, Delft University of Technology. Originally published at the Institute for New Economic Thinking website

This paper argues that (i) we have reached “peak GenAI” in terms of current Large Language Models (LLMs); scaling (building more data centers and using more chips) will not take us further to the goal of “Artificial General Intelligence” (AGI); returns are diminishing rapidly; (ii) the AI-LLM industry and the larger U.S. economy are experiencing a speculative bubble, which is about to burst.

The U.S. is undergoing an extraordinary AI-fueled economic boom: The stock market is soaring thanks to exceptionally high valuations of AI-related tech firms, which are fueling economic growth by the hundreds of billions of U.S. dollars they are spending on data centers and other AI infrastructure. The AI investment boom is based on the belief that AI will make workers and firms significantly more productive, which will in turn boost corporate profits to unprecedented levels. But the summer of 2025 did not bring good news for enthusiasts of generative Artificial Intelligence (GenAI) who were all hyped up by the inflated promise of the likes of OpenAI’s Sam Altman that “Artificial General Intelligence” (AGI), the holy grail of current AI research, would be right around the corner.

Let us more closely consider the hype. Already in January 2025, Altman wrote that “we are now confident we know how to build AGI”. Altman’s optimism echoed claims by OpenAI’s partner and major financial backer Microsoft, which had put out a paper in 2023 claiming that the GPT-4 model already exhibited “sparks of AGI.” Elon Musk (in 2024) was equally confident that the Grok model developed by his company xAI would reach AGI, an intelligence “smarter than the smartest human being”, probably by 2025 or at least by 2026. Meta CEO Mark Zuckerberg said that his company was committed to “building full general intelligence”, and that super-intelligence is now “in sight”. Likewise, Dario Amodei, co-founder and CEO of Anthropic, said “powerful AI”, i.e., smarter than a Nobel Prize winner in any field, could come as early as 2026, and usher in a new age of health and abundance: the U.S. would become a “country of geniuses in a datacenter”, if ….. AI didn’t wind up killing us all.

For Mr. Musk and his GenAI fellow travelers, the biggest hurdle on the road to AGI is the lack of computing power (installed in data centers) to train AI bots, which, in turn, is due to a lack of sufficiently advanced computer chips. The demand for more data and more data-crunching capabilities will require about $3 trillion in capital just by 2028, in the estimation of Morgan Stanley. That would exceed the capacity of the global credit and derivative securities markets. Spurred by the imperative to win the AI race with China, the GenAI propagandists firmly believe that the U.S. can be put on the yellow brick road to the Emerald City of AGI by building more data centers faster (an unmistakably “accelerationist” expression).

Interestingly, AGI is an ill-defined notion, and perhaps more of a marketing concept used by AI promoters to persuade their financiers to invest in their endeavors. Roughly, the idea is that an AGI model can generalize beyond specific examples found in its training data, similar to how some human beings can do almost any kind of work after having been shown a few examples of how to do a task, by learning from experience and changing methods when needed. AGI bots will be capable of outsmarting human beings, creating new scientific ideas, and doing innovative as well as all routine coding. AI bots will be telling us how to develop new medicines to cure cancer, fix global warming, drive our cars and grow our genetically modified crops. Hence, in a radical bout of creative destruction, AGI would transform not just the economy and the workplace, but also systems of health care, energy, agriculture, communications, entertainment, transportation, R&D, innovation and science.

OpenAI’s Altman boasted that AGI can “discover new science,” because “I think we’ve cracked reasoning in the models,” adding that “we have a long way to go.” He “think[s] we know what to do,” saying that OpenAI’s o3 model “is already pretty smart,” and that he’s heard people say “wow, this is like a good PhD.” Announcing the launch of ChatGPT-5 in August, Mr. Altman posted on the internet that “We think you will love using GPT-5 much more than any previous AI. It is useful, it is smart, it is fast [and] intuitive. With GPT-5 now, it’s like talking to an expert, a legitimate PhD level expert in anything any area you need on demand, they can help you with whatever your goals are.”

But then things began to fall apart, and rather quickly so.

ChatGPT-5 Is a Letdown

The first piece of bad news is that the much-hyped ChatGPT-5 turned out to be a dud: incremental improvements wrapped in a routing architecture, nowhere near the breakthrough to AGI that Sam Altman had promised. Users are underwhelmed. As the MIT Technology Review reports: “The much-hyped release makes several enhancements to the ChatGPT user experience. But it’s still far short of AGI.” Worryingly, OpenAI’s internal tests show GPT-5 ‘hallucinates’ in circa one in 10 responses on certain factual tasks, when connected to the internet. However, without web-browsing access, GPT-5 is wrong in almost 1 in 2 responses, which should be troubling. Even more worrisome, ‘hallucinations’ may also reflect biases buried within datasets. For instance, an LLM might ‘hallucinate’ crime statistics that align with racial or political biases simply because it has learned from biased data.

Of note here is that AI chatbots can be and are actively used to spread misinformation (see here and here). According to recent research, chatbots spread false claims when prompted with questions about controversial news topics 35% of the time, almost double the 18% rate of a year ago (here). AI curates, orders, presents, and censors information, influencing interpretation and debate, pushing dominant (average or preferred) viewpoints while suppressing alternatives, quietly removing inconvenient facts or making up convenient ones. The key issue is: Who controls the algorithms? Who sets the rules for the tech bros? It is evident that by making it easy to spread “realistic-looking” misinformation and biases and/or suppress critical evidence or argumentation, GenAI does and will have non-negligible societal costs and risks, which have to be counted when assessing its impacts.

Building Larger LLMs Is Leading Nowhere

The ChatGPT-5 episode raises serious doubts and existential questions about whether the GenAI industry’s core strategy of building ever-larger models on ever-larger data distributions has already hit a wall. Critics, including cognitive scientist Gary Marcus (here and here), have long argued that simply scaling up LLMs will not lead to AGI, and GPT-5’s sorry stumbles do validate those concerns. It is becoming more widely understood that LLMs are not constructed on proper and robust world models, but instead are built to autocomplete, based on sophisticated pattern-matching, which is why, for example, they still cannot even play chess reliably and continue to make mind-boggling errors with startling regularity.

My new INET Working Paper discusses three sobering research studies showing that novel ever-larger GenAI models do not become better, but worse, and do not reason, but rather parrot reasoning-like text. For example, a recent paper by scientists at MIT and Harvard shows that even when trained on all of physics, LLMs fail to uncover even the existing generalized and universal physical principles underlying their training data. Specifically, Vafa et al. (2025) note that LLMs follow a “Kepler-esque” approach: they can successfully predict the next position in a planet’s orbit, but fail to find the underlying explanation of Newton’s Law of Gravity (see here). Instead, they resort to fitting made-up rules that allow them to successfully predict the planet’s next orbital position, but these models fail to find the force vector at the heart of Newton’s insight. The MIT-Harvard paper is explained in this video. LLMs cannot and do not infer physical laws from their training data. Remarkably, they cannot even identify the relevant information from the internet. Instead, they make it up.

Worse, AI bots are incentivized to guess (and give an incorrect response) rather than admit they do not know something. This problem is recognized by researchers from OpenAI in a recent paper. Guessing is rewarded because, who knows, it might be right. The error is at present uncorrectable. Accordingly, it might well be prudent to think of “Artificial Information” rather than “Artificial Intelligence” when using the acronym AI. The bottom line is straightforward: this is very bad news for anyone hoping that further scaling, that is, building ever larger LLMs, would lead to better outcomes (see also Che 2025).

95% of Generative AI Pilot Projects in Companies Are Failing

Corporations had rushed to announce AI investments or claim AI capabilities for their products in the hope of turbocharging their share prices. Then came the news that the AI tools are not doing what they are supposed to do and that people are realizing it (see Ed Zitron). An August 2025 report titled The GenAI Divide: State of AI in Business 2025, published by MIT’s NANDA initiative, concludes that 95% of generative AI pilot projects in companies are failing to raise revenue growth. As reported by Fortune, “generic tools like ChatGPT [….] stall in enterprise use since they don’t learn from or adapt to workflows”. Quite.

Indeed, firms are backpedaling after cutting hundreds of jobs and replacing these by AI. For instance, Swedish “Buy Burritos Now, Pay Later” Klarna bragged in March 2024 that its AI assistant was doing the work of 700 (laid-off) workers, only to rehire them (sadly, as gig workers) in the summer of 2025 (see here). Other examples include IBM, forced to reemploy staff after laying off about 8,000 workers to implement automation (here). Recent U.S. Census Bureau data by firm size show that AI adoption has been declining among companies with more than 250 employees.

MIT economist Daron Acemoglu (2025) predicts rather modest productivity impacts of AI in the next 10 years and warns that some applications of AI may have negative social value. “We’re still going to have journalists, we’re still going to have financial analysts, we’re still going to have HR employees,” Acemoglu says. “It’s going to impact a bunch of office jobs that are about data summary, visual matching, pattern recognition, etc. And those are essentially about 5% of the economy.” Similarly, using two large-scale AI adoption surveys (late 2023 and 2024) covering 11 exposed occupations (25,000 workers in 7,000 workplaces) in Denmark, Anders Humlum and Emilie Vestergaard (2025) show, in a recent NBER Working Paper, that the economic impacts of GenAI adoption are minimal: “AI chatbots have had no significant impact on earnings or recorded hours in any occupation, with confidence intervals ruling out effects larger than 1%. Modest productivity gains (average time savings of 3%), combined with weak wage pass-through, help explain these limited labor market effects.” These findings provide a much-needed reality check for the hyperbole that GenAI is coming for all of our jobs. Reality is not even close.

GenAI will not even make tech workers who do the coding redundant, contrary to the prediction by AI enthusiasts. OpenAI researchers found (in early 2025) that advanced AI models (including GPT-4o and Anthropic’s Claude 3.5 Sonnet) still are no match for human coders. The AI bots failed to grasp how widespread bugs are or to understand their context, leading to solutions that are incorrect or insufficiently comprehensive. Another new study from the nonprofit Model Evaluation and Threat Research (METR) finds that in practice, programmers using early-2025 AI tools are actually slower when using AI assistance, spending 19 percent more time when using GenAI than when actively coding by themselves (see here). Programmers spent their time on reviewing AI outputs, prompting AI systems, and correcting AI-generated code.

The U.S. Economy at Large Is Hallucinating

The disappointing rollout of ChatGPT-5 raises doubts about OpenAI’s ability to build and market consumer products that users are willing to pay for. But the point I want to make is not just about OpenAI: the American AI industry as a whole has been built on the premise that AGI is just around the corner. All that is needed is sufficient “compute”, i.e., millions of Nvidia AI GPUs, enough data centers and sufficient cheap electricity to do the massive statistical pattern mapping needed to generate (a semblance of) “intelligence”. This, in turn, means that “scaling” (investing billions of U.S. dollars in chips and data centers) is the one-and-only way forward, and this is exactly what the tech firms, Silicon Valley venture capitalists and Wall Street financiers are good at: mobilizing and spending funds, this time for “scaling-up” generative AI and building data centers to support all the expected future demand for AI use.

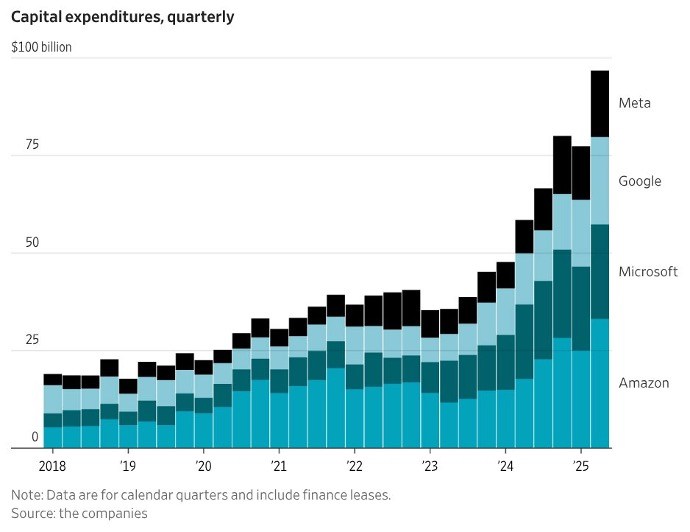

During 2024 and 2025, Big Tech firms invested a staggering $750 billion in data centers in cumulative terms and they plan to roll out a cumulative investment of $3 trillion in data centers during 2026-2029 (Thornhill 2025). The so-called “Magnificent 7” (Alphabet, Apple, Amazon, Meta, Microsoft, Nvidia, and Tesla) spent more than $100 billion on data centers in the second quarter of 2025; Figure 1 gives the capital expenditures for four of the seven corporations.

FIGURE 1

Christopher Mims (2025), https://x.com/mims/status/1951…

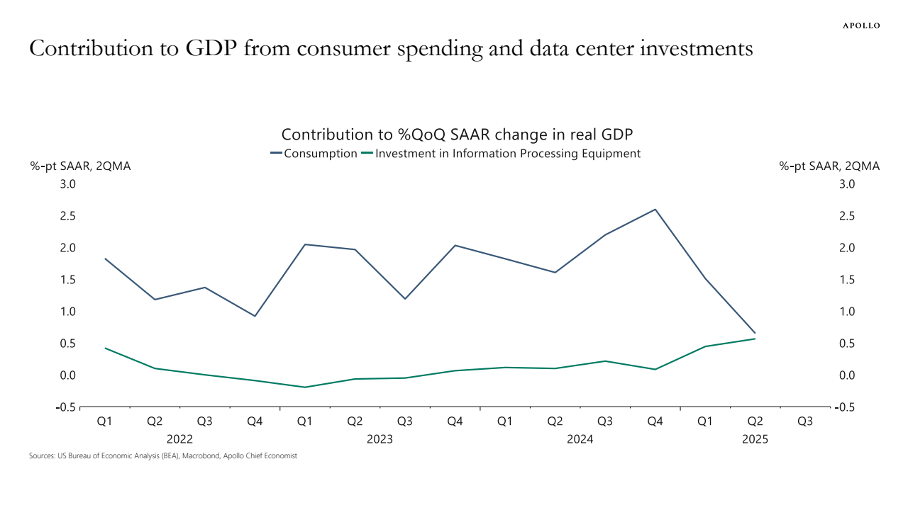

The surge in corporate investment in “information processing equipment” is huge. According to Torsten Sløk, chief economist at Apollo Global Management, data center investments’ contribution to (sluggish) real U.S. GDP growth has been the same as consumer spending over the first half of 2025 (Figure 2). Financial investor Paul Kedrosky finds that capital expenditures on AI data centers (in 2025) have passed the peak of telecom spending during the dot-com bubble (of 1995-2000).

FIGURE 2

Source: Torsten Sløk (2025). https://www.apolloacademy.com/…

Following the AI hype and hyperbole, tech stocks have gone through the roof. The S&P500 Index rose by circa 58% during 2023-2024, driven mostly by the growth of the share prices of the Magnificent Seven. The weighted-average share price of these seven corporations increased by 156% during 2023-2024, while the other 493 firms experienced an average increase in their share prices of just 25%. America’s stock market is largely AI-driven.
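A back-of-the-envelope sketch shows what these three figures imply when taken together. It assumes, purely for illustration, that the index return is a simple weighted average of the two group returns; this ignores dividends, rebalancing and shifting weights, and the 25% figure may be an unweighted average. The function name and the linear decomposition are illustrative assumptions, not part of the paper.

```python
def implied_weight(index_return: float, group_return: float, rest_return: float) -> float:
    """Solve index = w * group + (1 - w) * rest for the group weight w."""
    if group_return == rest_return:
        raise ValueError("group and rest returns must differ to identify a weight")
    return (index_return - rest_return) / (group_return - rest_return)


# Figures quoted in the text for 2023-2024 (as fractions, not percent).
INDEX_RETURN = 0.58   # S&P 500
MAG7_RETURN = 1.56    # Magnificent Seven, weighted average
REST_RETURN = 0.25    # the other 493 firms

# Start-of-period weight of the seven firms implied by the three numbers.
w = implied_weight(INDEX_RETURN, MAG7_RETURN, REST_RETURN)

# Share of the total index gain attributable to the seven firms.
share_of_gain = w * MAG7_RETURN / INDEX_RETURN

print(f"implied Magnificent Seven weight: {w:.1%}")
print(f"share of index gain from the seven firms: {share_of_gain:.1%}")
```

Under this simplified reading, seven firms carrying roughly a quarter of the index weight account for about two-thirds of its two-year gain.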

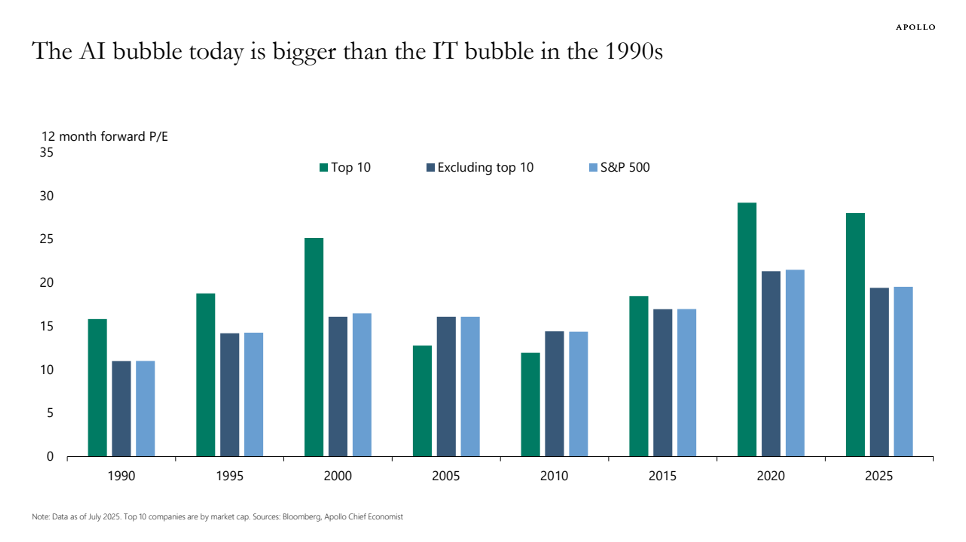

Nvidia’s shares rose by more than 280% over the past two years amid the exploding demand for its GPUs coming from the AI firms; as one of the most high-profile beneficiaries of the insatiable demand for GenAI, Nvidia now has a market capitalization of more than $4 trillion, which is the highest valuation ever recorded for a publicly traded company. Does this valuation make sense? Nvidia’s price-earnings (P/E) ratio peaked at 234 in July 2023 and has since declined to 47.6 in September 2025, which is still historically very high (see Figure 3). Nvidia is selling its GPUs to neocloud companies (such as CoreWeave, Lambda, and Nebius), which are funded by credit from Goldman Sachs, JPMorgan, Blackstone and other Wall Street private equity firms, collateralized by the data centers filled with GPUs. In key cases, as explained by Ed Zitron, Nvidia offered the neocloud companies, which are loss making, to buy unsold cloud compute worth billions of U.S. dollars, effectively backstopping its clients, all in the expectation of an AI revolution that still has to arrive.

Likewise, the share price of Oracle Corp. (which is not included in the “Magnificent 7”) rose by more than 130% between mid-May and early September 2025 following the announcement of its $300 billion cloud-computing infrastructure deal with OpenAI. Oracle’s P/E ratio shot up to almost 68, which means that financial investors are willing to pay almost $68 for $1 of Oracle’s future earnings. One obvious problem with this deal is that OpenAI does not have $300 billion; the company made a loss of $15 billion during 2023-2025 and is projected to make a further cumulative loss of $28 billion during 2026-2028 (see below). It is unclear and uncertain where OpenAI will get the money from. Ominously, Oracle needs to build the infrastructure for OpenAI before it can collect any revenue. If OpenAI cannot pay for the enormous computing capacity it agreed to buy from Oracle, which seems likely, Oracle will be left with the expensive AI infrastructure, for which it may not be able to find alternative customers, especially once the AI bubble fizzles out.
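The P/E figures quoted here can be read the other way around. A minimal sketch, assuming earnings stay constant (a strong simplification): the inverse of the P/E ratio is the earnings yield, and the ratio itself is the number of years of current profit needed to recoup the share price. The function name and dictionary labels are illustrative; the ratios are the ones cited in the text.

```python
def earnings_yield(pe_ratio: float) -> float:
    """Annual earnings per dollar of share price, i.e. the inverse of P/E."""
    if pe_ratio <= 0:
        raise ValueError("P/E ratio must be positive")
    return 1.0 / pe_ratio


# P/E ratios as quoted in the text.
pe_ratios = {
    "Oracle (Sep 2025)": 68.0,
    "Nvidia (Sep 2025)": 47.6,
    "Nvidia (Jul 2023 peak)": 234.0,
}

yields = {name: earnings_yield(pe) for name, pe in pe_ratios.items()}

for name, pe in pe_ratios.items():
    # With flat earnings, payback time in years equals the P/E ratio.
    print(f"{name}: P/E {pe:g}, earnings yield {yields[name]:.2%}, "
          f"payback about {pe:.0f} years at constant earnings")
```

At a P/E of 68, each dollar invested buys about 1.5 cents of current annual earnings, so the price only makes sense if profits grow very fast.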

Tech stocks thus are considerably overvalued. Torsten Sløk, chief economist at Apollo Global Management, warned (in July 2025) that AI stocks are even more overvalued than dot-com stocks were in 1999. In a blogpost, he illustrates how P/E ratios of Nvidia, Microsoft and eight other tech companies are higher than during the dot-com era (see Figure 3). We all remember how the dot-com bubble ended, and hence Sløk is right in sounding the alarm over the apparent market mania, driven by the “Magnificent 7” that are all heavily invested in the AI industry.

Big Tech does not buy these data centers and operate them itself; instead the data centers are built by construction companies and then purchased by data center operators who lease them to (say) OpenAI, Meta or Amazon (see here). Wall Street private equity firms such as Blackstone and KKR are investing billions of dollars to buy up these data center operators, using commercial mortgage-backed securities as source funding. Data center real estate is a new, hyped-up asset class that is beginning to dominate financial portfolios. Blackstone calls data centers one of its “highest conviction investments.” Wall Street loves the lease contracts of data centers, which offer long-term stable, predictable income, paid by AAA-rated clients like AWS, Microsoft and Google. Some Cassandras are warning of a potential oversupply of data centers, but given that “the future will be based on GenAI”, what could possibly go wrong?

FIGURE 3

Source: Torsten Sløk (2025), https://www.apolloacademy.com/…

In a rare moment of frankness, OpenAI CEO Sam Altman had it right. “Are we in a phase where investors as a whole are overexcited about AI?” Altman said during a dinner interview with reporters in San Francisco in August. “My opinion is yes.” He also compared today’s AI investment frenzy to the dot-com bubble of the late 1990s. “Someone’s gonna get burned there, I think,” Altman said. “Someone is going to lose a phenomenal amount of money – we don’t know who …”, but (going by what happened in earlier bubbles) it will most likely not be Altman himself.

The question therefore is how long investors will continue to prop up sky-high valuations of the key firms in the GenAI race. Earnings of the AI industry continue to pale in comparison to the tens of billions of U.S. dollars that are spent on data center growth. According to an upbeat S&P Global research note published in June 2025, the GenAI market is projected to generate $85 billion in revenue by 2029. However, Alphabet, Google, Amazon and Meta together will spend nearly $400 billion on capital expenditures in 2025 alone. At the same time, the AI industry has a combined revenue that is little more than the revenue of the smart-watch industry (Zitron 2025).

So, what if GenAI just is not profitable? This question is pertinent in view of the rapidly diminishing returns to the stratospheric capital expenditures on GenAI and data centers and the disappointing user experience of 95% of firms that adopted AI. One of the largest hedge funds in the world, Florida-based Elliott, told clients that AI is overhyped and Nvidia is in a bubble, adding that many AI products are “never going to be cost-efficient, never going to actually work right, will take up too much energy, or will prove to be untrustworthy.” “There are few real uses,” it said, other than “summarizing notes of meetings, generating reports and helping with computer coding”. It added that it was “skeptical” that Big Tech companies would keep buying the chipmaker’s graphics processing units in such high volumes.

Locking billions of U.S. dollars into AI-focused data centers with no clear exit strategy for these investments in case the AI craze ends only means that systemic risk in finance and the economy is building. With data-center investments driving U.S. economic growth, the American economy has become dependent on a handful of corporations, which have not yet managed to generate one dollar of profit on the ‘compute’ done by these data center investments.

America’s High-Stakes Geopolitical Bet Gone Wrong

The AI boom (bubble) developed with the support of both major political parties in the U.S. The vision of American firms pushing the AI frontier and reaching GenAI first is widely shared; in fact, there is a bipartisan consensus on how important it is that the U.S. should win the global AI race. America’s industrial capability is critically dependent on a number of potential adversary nation-states, including China. In this context, America’s lead in GenAI is considered to constitute a potentially very powerful geopolitical lever: If America manages to get to AGI first, so the analysis goes, it can build up an overwhelming long-term advantage over especially China (see Farrell).

That is the reason why Silicon Valley, Wall Street and the Trump administration are doubling down on the “AGI First” strategy. But astute observers highlight the costs and risks of this strategy. Prominently, Eric Schmidt and Selina Xu fear, in the New York Times of August 19, 2025, that “Silicon Valley has grown so enamored with accomplishing this goal [of AGI] that it’s alienating the general public and, worse, bypassing crucial opportunities to use the technology that already exists. In being solely fixated on this objective, our nation risks falling behind China, which is far less concerned with creating A.I. powerful enough to surpass humans and much more focused on using the technology we have now.”

Schmidt and Xu are rightly worried. Perhaps the plight of the U.S. economy is captured best by OpenAI’s Sam Altman, who fantasizes about putting his data centers in space: “Like, maybe we build a big Dyson sphere around the solar system and say, “Hey, it actually makes no sense to put these on Earth.”” For as long as such ‘hallucinations’ on using solar-collecting satellites to harvest (unlimited) star power continue to convince gullible financial investors, the government and users of the “magic” of AI and the AI industry, the U.S. economy is surely doomed.
