
Yves here. Servaas Storm provides a fantastic broad and suitably sobering view of the AI/stock market mania and the far too many reasons why US players can’t possibly deliver on their hype.

One tiny quibble, more of presentation: Storm discusses AI borrowing, initially making it sound as if it is being done by the big players themselves, which to a degree it has been. He then does describe how they are increasingly making use of off-balance-sheet vehicles, and so far, investors are bizarrely keen on them.

I’ve not looked at any of these structures, but wonder how off-balance-sheet they will prove to be in practice. During the financial crisis, banks that had been securitizing credit card receivables found lenders were making them eat some of the losses, despite the entities having been structured so as to be arm’s length. The funders said, effectively: Try selling us another one. The banks relied on being able to off-load the receivables (they could not afford the capital costs of retaining them) and so relented.

In at least one data-center deal, Meta had signed up for four successive five-year leases. When I was a kid, we’d capitalize this sort of commitment as an operating lease and treat it as debt. That doesn’t seem to be modern practice. What happens if the underlying business comes a cropper? Does Meta try to default on the lease payments?

By Servaas Storm, Senior Lecturer of Economics, Delft University of Technology. Originally published at the Institute for New Economic Thinking website

Introduction

Three years ago, on November 30, 2022, ChatGPT was released to the public. This Large Language Model (LLM) was a complete novelty, an AI tool that appeared able to do things that no one believed to be possible. Within five days of launch, over one million users had signed up to chat with the AI bot, a growth rate 30 times faster than Instagram’s and 6 times faster than TikTok’s at their start. It became the fastest-growing consumer product in history, recording more than 800 million weekly users in October 2025. OpenAI, the start-up that developed ChatGPT and that began as a non-profit in 2015, became a household name almost overnight, and is now valued at $500 billion or even $1 trillion (for an initial public offering). Surfing the LLM wave, Nvidia, the producer of around 94% of the GPUs needed by the AI industry, became the first public company ever to hit a market capitalization of $5 trillion on October 29, 2025, up from just $0.4 trillion in 2022. Nvidia currently has a weight of around 8.5% in the S&P 500 Index, while the so-called ‘Magnificent 7’ (Alphabet, Amazon, Apple, Meta, Microsoft, Nvidia and Tesla) have a combined weight in the S&P 500 of circa 37%.

The data-centre investments by a concentrated set of hyper-scalers in the American AI industry constitute the main source of growth in an otherwise sclerotic U.S. economy. The U.S. is betting the economy on reaching Artificial General Intelligence (AGI), by building ever bigger computing infrastructure to run and test-time their LLMs, using more GPUs, more power, more cooling water and more data than ever before.

But three years after ChatGPT’s launch, fears are growing in many quarters that the bet on scaling LLMs to reach AGI is going wrong. The AI boom, many whisper, may be a bubble. The Trump administration and the AI industry are showing signs of nervousness. At a recent Wall Street Journal tech conference, OpenAI Chief Financial Officer Sarah Friar suggested that a government loan guarantee might be necessary to fund the enormous investments needed to keep the company at the cutting edge. Her coded message was that OpenAI has become too big to fail (TBTF). The message was understood. President Trump’s AI and crypto czar, David Sacks, said (in response) that a reversal in AI-related investments would risk a recession. “We can’t afford to go backwards,” he added, indicating support for Friar’s demand. (He later clarified that he remains opposed to any bailouts for individual companies in the AI sector.)

History Rhymes, Or Is This Time Different?

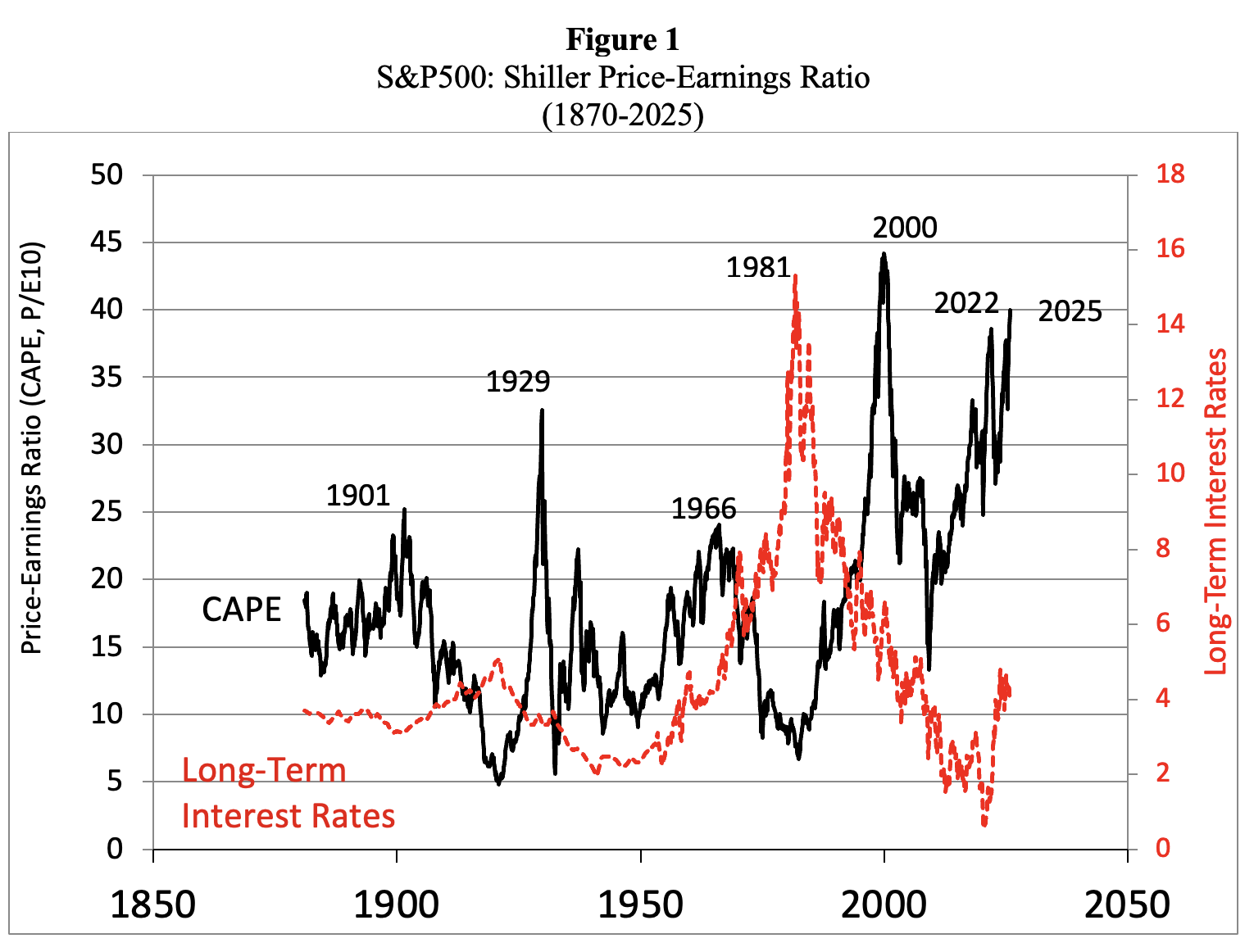

The U.S. stock market is undoubtedly firmly in bubble territory. Figure 1 presents data on the S&P 500’s Shiller P/E Ratio (also known as the CAPE Ratio), calculated as the index price divided by average inflation-adjusted earnings from the previous 10 years. Historically, a Shiller P/E Ratio above 30 has been a harbinger of speculative excess, followed by a bear market. In December 2023, the Shiller index rose to 30.45 and has remained above 30 ever since; in November 2025, the Shiller P/E ratio rose above 40.
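The definition of the Shiller P/E Ratio can be made concrete with a minimal sketch. The function name `shiller_pe` and every number below are hypothetical illustrations, not Shiller's data or code; the sketch only assumes the standard definition of price divided by the ten-year average of inflation-adjusted earnings.

```python
from statistics import mean


def shiller_pe(price, nominal_earnings, cpi, cpi_now):
    """Price divided by the average of inflation-adjusted earnings.

    nominal_earnings and cpi are parallel lists, one entry per year
    (ten years for the standard Shiller measure). Each year's earnings
    are restated in today's prices using the ratio cpi_now / cpi.
    """
    if len(nominal_earnings) != len(cpi):
        raise ValueError("earnings and CPI series must have equal length")
    real_earnings = [e * cpi_now / c for e, c in zip(nominal_earnings, cpi)]
    return price / mean(real_earnings)


# Hypothetical inputs: earnings per index unit doubled in nominal terms,
# but the price level doubled too, so real earnings were flat at 100.
earnings = [50.0] * 5 + [100.0] * 5
price_level = [100.0] * 5 + [200.0] * 5

ratio = shiller_pe(4000.0, earnings, price_level, cpi_now=200.0)
print(f"Shiller P/E: {ratio:.1f}")
```

With these made-up inputs the ratio comes out at 40, the level the text reports for November 2025. Adjusting for inflation matters: using nominal earnings instead would understate the ten-year average and overstate the ratio.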

Since 1871, this is only the sixth instance in which the CAPE Ratio exceeded 30. The first time it happened was during August-September 1929 and we all know what came next: the Dow Jones Industrial Average lost 89% of its value. The second time occurred almost seven decades later, during the end-of-the-millennium dotcom bubble, when the Shiller P/E ratio recorded an all-time high of 44.19 in December 1999. Following the bursting of the dot-com bubble, the S&P 500 lost 49% of its peak value.

The next three peaks above 30 in the Shiller P/E Ratio occurred very recently: during September 2017-November 2018; December 2019-February 2020; and August 2020-May 2022. Following these surges, the S&P 500 eventually dropped by anywhere between 20% and 33%. We are currently living in the sixth such period of speculative excess.

Supply: Robert Shiller (2025), https://shillerdata.com/ (accessed on 15/11/2025)

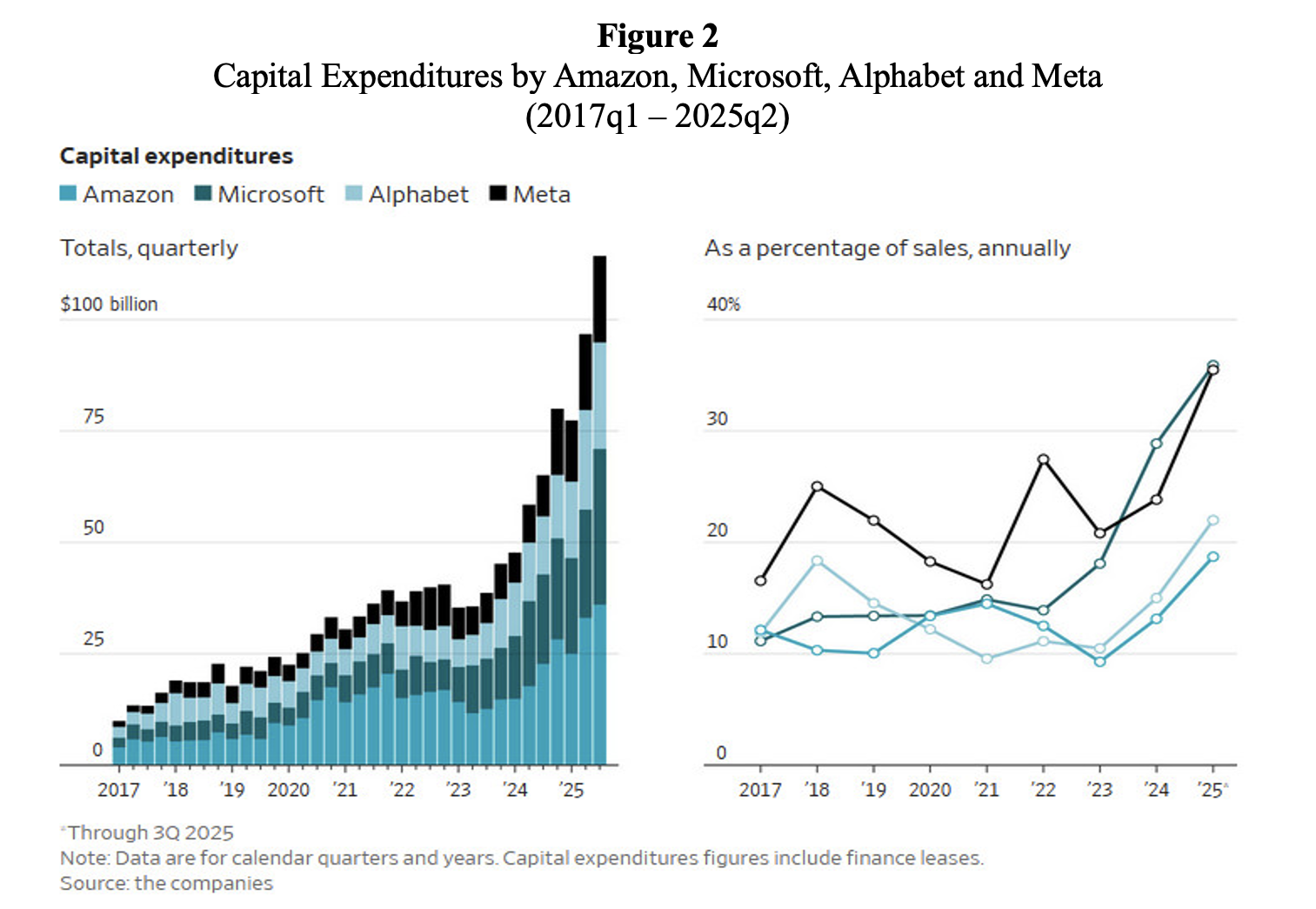

Nonetheless, the AI party is still in full swing. AI companies are racing to build out data centre infrastructure for what they believe is almost limitless demand for AI services. Capital expenditures on data-centre infrastructure by Amazon, Alphabet, Meta and Microsoft are steeply rising (also as a percentage of sales) (Figure 2).

JP Morgan Chase & Co projects that 122GW of data centre capacity will be built from 2026-2030 to meet the (arguably) astronomical demand for ‘compute’ (Wigglesworth 2025a). The additional 122GW of data centre capacity is estimated to cost between $5 trillion and $7 trillion. For 2026, the projected data centre funding needs will be around $700 billion, which, according to the report, could probably be fully financed by hyper-scaler cash flows and by High-Grade bond markets. “Nonetheless, 2030 funding needs are in excess of $1.4 trillion, which will surpass present market capabilities, necessitating the search for alternative funding sources.”

Source: Christopher Mims (2025), ‘When AI Hype Meets AI Reality: A Reckoning in 6 Charts.’ Wall Street Journal, November 14.

Crucially, most of the mega-financing deals are remarkably circular. To give you a flavour: Nvidia invests in OpenAI and OpenAI is looking to buy millions of Nvidia’s specialised chips. OpenAI buys computing power from Oracle, which buys Nvidia’s GPUs. Nvidia owns about 5% of CoreWeave and sells chips to CoreWeave. CoreWeave’s biggest customer is Microsoft, which is an investor in OpenAI, shares revenue with OpenAI, buys chips from Nvidia and has partnerships with AMD. AMD, a rival to Nvidia, was so eager to land OpenAI as a customer that it issued warrants for OpenAI to buy 10% of AMD at a penny a share. OpenAI is a CoreWeave customer and also a shareholder. Nvidia has invested in xAI and will supply it with processors. And so on and so forth. The deals include revenue sharing across the stack and cross-ownership.

Nothing in these circular deals is transparent. It is not clear where the money needed for these deals is coming from. It is not clear what these opaque circular transactions imply for the valuations of the listed and private AI companies involved. It is not clear what all this means for the competition over hardware between chip producers (Nvidia versus AMD) and over AI services between AI startups (OpenAI versus Anthropic versus xAI versus Microsoft). Not surprisingly, therefore, these astronomical circular financing deals (referencing billions of U.S. dollars) are raising eyebrows. To many observers, they bring back traumatic memories of the circular financing arrangements of the late 1990s, when vendors and customers bolstered each other’s dotcom stock valuations without generating any real value.

AI Bang or Bubble?

AI industry leaders are now busy doubling down on their message that the AI revolution is NOT A BUBBLE, but real and sustainable. Nvidia CEO Jensen Huang stated (in an earnings call on November 19) that there is no AI bubble and that exponentially growing AI demand is structural rather than speculative. Huang claims that the AI boom constitutes a Big Bang, a historic revolution, because of three fundamental shifts toward accelerated computing: the move from CPUs to GPUs (which can simultaneously process multiple tasks and features), the rise of generative AI, and the emergence of agentic AI systems that supposedly can independently make decisions based on large datasets. Asset-management firm BlackRock concurs: “AI is not just a technological advancement; it represents an infrastructure transformation with growing macroeconomic significance”, adding that “unlike the speculative frenzy of the late 1990s and early 2000s, today’s technology leaders are anchored by fundamental stability.” The optimistic vibes around the transformative power of AI have even infected Nouriel Roubini (2025), the profession’s perennial Dr. Doom and now a senior economic strategist at Hudson Bay Capital, who has somehow become convinced that the tailwinds of the unprecedented AI data-centre investment boom will overwhelm any disruptions coming from Trump’s tariffs and geopolitical strains.

But the various statements by Huang, BlackRock and Roubini are “sound and fury, signifying nothing.” The structural demand for Nvidia’s GPUs originates from the same circle of companies that are betting the business on scaling AI. The AI data centres are not the “shovels of the AI gold rush”, but rather a black hole in which billions of dollars disappear.

The surge in demand for GPUs and data-centre infrastructure may, in other words, very well be speculative, if it turns out that the AI models are not delivering good value for money to the investors and cannot live up to the exaggerated expectations of the Lords of the AI Ring. Every reader of Kindleberger and Galbraith knows that this would not be the first time in (economic) history that most people, all in the same bubble, had it completely wrong, all at the same time. The financial crisis of 2008 is just the most recent example of a collective mania that led to a crash.

The Unbearable Irrationality of the AI Industry

The AI race is mostly based on the irrational fear of missing out (FOMO), in Silicon Valley and on Wall Street, which induces a herd mentality to follow ‘momentum’, a complete disregard for fundamental values in favour of placing an exaggerated importance on the limited availability of a key resource (here: Nvidia’s GPUs and ‘compute’), and overwhelming confirmation bias (the all-too-human inclination to look for information that confirms our own biased outlook). To bring home the point: the use of ChatGPT has been found to decrease idea diversity in brainstorming, as per an article in Nature.

It is deeply ironic that the industry that is supposed to build ‘super-intelligence’, a deeply flawed concept with rather sinister origins (see Emily M. Bender and Alex Hanna 2025), is itself deeply irrational. But solid anthropological evidence on the native tribes living in Silicon Valley and working on Wall Street shows that this irrationality is hardwired into the perma-adolescent psyches of the inhabitants, who are wont to talk to each other about the coming AIpocalypse, almost religiously believe in AI prophecies, have deep faith in their algorithms, regard AI as a superior ‘sentient being’ in need of legal representation, enthusiastically engage in techno-eschatology, and, above all, are deeply fond of Hobbits and the LOTR. These very same people are also used to talking about and thinking of billions of dollars as just ‘stuff’ that funds compute, necessary for scaling in order to reach AGI. “I don’t care if we burn $50 billion a year, we’re building AGI,” Sam Altman said, adding: “We’re making AGI, and it’ll be costly and completely worth it.” The same Sam Altman lost his cool during an interview with podcaster and OpenAI investor Brad Gerstner, when he was asked how it all is supposed to add up, given OpenAI’s minuscule revenue. “If you want to sell your shares, I’ll find you a buyer,” a taken-aback Altman replied curtly. “Enough.”

There are more signs of irrationality in the AI industry. In the first six months of 2025, AI start-ups that have no profits, no sales, no pitch and no product to speak of have been securing billions of dollars of funding. For example, pre-revenue, pre-product AI company ‘Safe Superintelligence’, founded by Ilya Sutskever, ex-chief scientist at OpenAI, raised $2 billion at a $32 billion valuation in April 2025. Similarly, ‘Thinking Machines Lab’, an AI research and product company launched by OpenAI’s former chief technology officer Mira Murati, raised $2 billion at a valuation of $12 billion from investors such as Nvidia, AMD and Cisco in July 2025. The company has not released a product, has no customers and has even refused to tell investors what it is trying to build. “It was the most absurd pitch meeting,” one investor who met with Murati said. “She was like, ‘So we’re doing an AI company with the best AI people, but we can’t answer any questions.’”

The best AI people are in charge, or so they tell us. A handful of labs, led by Tolkienesque techies, control the narrative around frontier LLMs. The narrative they tell the public is that they are on a mission to build AGI to benefit all of humanity, but that this emerging autonomous super-intelligence is complex and potentially dangerous, even apocalyptic. Anthropic’s chief scientist Jared Kaplan is only the latest in a long line of AI experts sounding the alarm; or consider Geoffrey Hinton, who predicts, once more, the complete breakdown of society once AI gets smarter than people. The overblown claims go like this: “It might become impossible for humans to get paid to do work because the superintelligence could do it better and cheaper. You and I would not have jobs. Nobody would have jobs.” The message is clear: reaching AGI is Very Serious Stuff, and Potentially Dangerous. But rest assured: the AI developers can safely handle these risks and they have the expertise to decide what counts as ‘safe’. Reaching AGI will also need gigantic data centres, land, electricity and water (for cooling), but don’t worry: the promised results will be transformative and a benefit for all. AGI will find cures for cancer, discover ways to end hunger, solve the affordability crisis, empower students and workers, and somehow also solve climate change. Just trust us, they tell us. Give us the resources we need to build AGI.

But what they tell their investors is altogether different: we are building technology that can “do essentially what you’ll pay us for”, including making workers redundant by automating jobs or turning them into gig workers while putting them under corporate surveillance. Emily M. Bender and Alex Hanna (2025) recount, for example, how the National Eating Disorders Association in the U.S. replaced its hotline operators with a chatbot days after the former voted to unionise. AI algorithms also work successfully to help landlords push the highest possible rents on tenants. Likewise, the health insurance industry uses AI automation and predictive technologies to systematically deny patients coverage for necessary medical care. AI also works for the military: defence company Anduril builds autonomous drones, virtual reality headsets, and other AI-powered technologies for the U.S. military. And private equity firms are hiring AI people to go through the companies they own and see how these should be restructured.

This way, the AI industry has convinced the financial sector, the cash-rich platform corporations and wealthy venture capitalists to invest their cash in funding the training and inference of the LLMs and the giga-data-centre infrastructure that is driving the current AGI boom in the U.S. In a new Working Paper, I argue that this AI data-centre investment boom is a bubble which will pop, probably rather sooner than later. This will prove to be socially costly to the larger U.S. economy, not just because of the inevitable correction, crash and recession, but more fundamentally, in terms of the scarce resources that have been and will be wasted on the hallucinogenic pipe dreams of a few entitled Ayn Randian billionaire tech brothers and sisters, who, quite in character and as was noted already above, have begun to hedge their bets by begging the taxpayer for subsidies and government loan guarantees (Cooper 2025). The AI bubble will eventually pop for the following four reasons.

The Revenue Delusion

There is no world in which the enormous spending on data centre infrastructure (more than $5 trillion in the next five years) is going to pay off; the AI-revenue projections are pie-in-the-sky because of the following:

- The costs of training AI models and the cost of inference are rising. It is particularly painful that compute demand is scaling faster than the efficiency of the tools that generate it. As a result, the (operational) costs of inference continue to rise faster than the revenues of the AI companies. This is shown by the very clever financial detective work done on OpenAI’s quarterly revenue and inference costs by Ed Zitron. In fact, the growth rate of AI’s compute demand (which is driven by the industry’s belief that the larger the scale of AI computing power, the better will be its output) is more than twice the rate of Moore’s law. As a result, the demand for GPUs by the AI industry is soaring and Nvidia, which has a near-monopoly market share of 94% in GPUs, can charge high prices for the newest chips or high fees for leasing the GPUs to the AI companies.

- AI’s inference costs are rising (not declining), even though the cost per million tokens of LLM inference has declined by around 98%, from $20 per million tokens in late 2022 to around $0.40 per million tokens in August 2025 (Barla 2025). However, the AI industry uses considerably larger training data sets now than one or two years ago, and also consumes more tokens because of test-time computing. Test-time computing is the latest approach to scaling LLMs, which allows for longer context windows and bigger thoughts from the models. As a result, a typical enterprise query in 2021 used fewer than 220 tokens; by 2025, models such as GPT-4 Pro and ChatGPT-5 process around 22,000 tokens in a single exchange based on test-time computing. At the current rate of expansion, tokens per query could rise to between 150,000 and 1,500,000 by 2030, depending on task complexity. In effect, with a rapidly declining cost per token and a much larger increase in token consumption per generated word, AI application inference costs have grown about 10 times over the last two years (see Working Paper). Scaling is very costly, in other words.

- The sad truth is that customers are unlikely to pay (enough) for the rather modest services offered by the LLMs, given the eventual oversupply of LLM services. Only 5% of OpenAI users (circa 4 million users) currently are paying subscribers, paying (a minimum of) $20 per month. 95% of everyday users use the free product and remain unconvinced about the value of AI-powered devices and services. More money from usage comes from the 1.5 million enterprise customers that are using ChatGPT. However, enterprises have the option to use far cheaper Chinese LLMs, such as DeepSeek and Alibaba Cloud, which achieve performance levels comparable to leading American AI models and continue to slash prices. The user cost per 1 million tokens is $0.14 for DeepSeek R1 compared to $7.50 for ChatGPT o1. To illustrate the difference: a news website that uses AI for automated article summaries and processes 500 million tokens per month would pay $70 for DeepSeek R1 versus $3,750 for ChatGPT o1. The cost difference is considerable and impossible to justify in terms of any superior performance of the OpenAI tool. Price competition will drive down user costs charged by U.S. AI firms, which fatally undermines their business model. OpenAI has just declared Code Red, after losing 6% of its users in a week due to Google’s new Gemini-3 model. By any reasonable measure OpenAI has squandered the sizable lead it once had. If investors decide to opt out, it will be impossible to keep the operation afloat and OpenAI’s predicted valuation could drop, perhaps dramatically, as happened with WeWork, the value of which dropped rapidly from its peak of $47 billion to near-bankruptcy. If OpenAI goes down, the stock price of Nvidia will drop as well.

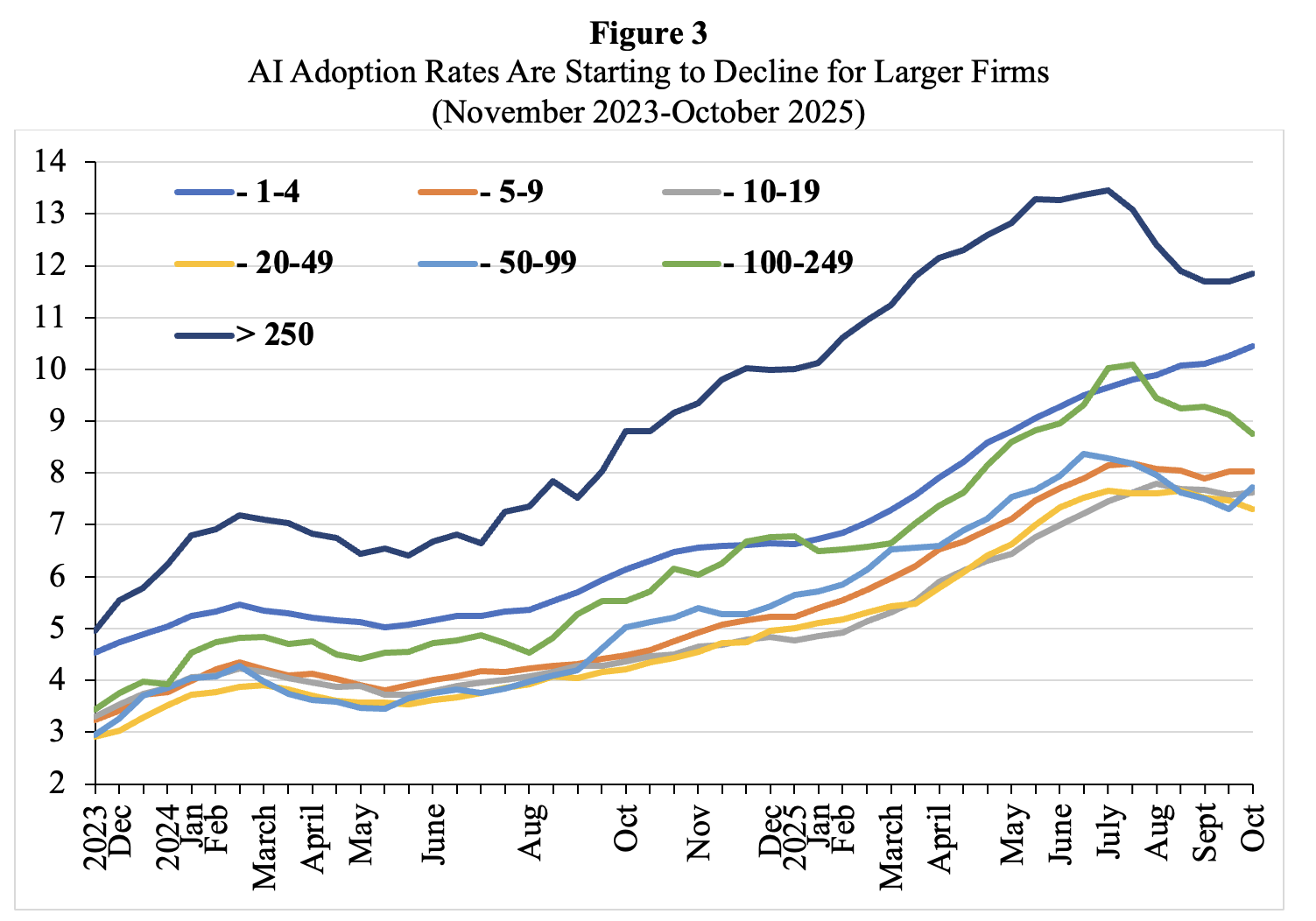

- Lastly, user satisfaction with the performance of AI tools is stagnating or even already declining. Enterprise customers are becoming less enthusiastic about their AI tools. Everybody has already heard about the MIT study that showed only 5% of companies were getting a return on generative AI investment. McKinsey (2025) just ran a study, and the results were not much different. McKinsey (2025) finds that two-thirds of companies are just at the piloting stage. Nearly two-thirds of respondents said their organizations have not yet begun scaling AI across the enterprise. And only about one in 18 companies are what the consulting firm calls “high performers” that have deeply integrated AI and see it driving more than 5% of their earnings. The problem is the integration of the AI tools in workflows and organisation. Recent U.S. Census Bureau data by firm size show that AI adoption has been declining among companies with more than 250 employees (Figure 3).

Hence, most AI firms will fail to turn a profit, as prices will fall (because of Chinese competition), while (training and inference) costs go up as a result of the scaling strategy.

Source: U.S. Census Bureau, Business Trends and Outlook Survey (BTOS) 2023-2025. Notes: The U.S. Census Bureau conducts a biweekly survey of 1.2 million firms. Businesses are asked whether they have used AI tools such as machine learning, natural language processing, virtual agents or voice recognition to help produce goods or services in the past two weeks. See Torsten Sløk (2025b), https://www.apolloacademy.com/ai-adoption-rate-trending-down-for-large-companies/
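The token arithmetic in this section is easy to check with a short sketch. The helper `cost_usd` is a hypothetical name introduced here, and pairing the 220-token query with the late-2022 price of $20 per million tokens is an assumption (the text dates that query size to 2021). All prices and token counts are the ones quoted above.

```python
def cost_usd(tokens, price_per_million_usd):
    """Cost in U.S. dollars of processing `tokens` at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million_usd


# Cost of one query: far fewer dollars per token, far more tokens per query.
early_query = cost_usd(220, 20.00)       # roughly 220 tokens at $20 per million
recent_query = cost_usd(22_000, 0.40)    # roughly 22,000 tokens at $0.40 per million
growth = recent_query / early_query

print(f"early query:  ${early_query:.4f}")
print(f"recent query: ${recent_query:.4f}")
print(f"cost per query grew about {growth:.1f}x")

# Monthly bill for the news website processing 500 million tokens.
monthly_tokens = 500_000_000
deepseek_bill = cost_usd(monthly_tokens, 0.14)
openai_bill = cost_usd(monthly_tokens, 7.50)

print(f"DeepSeek R1: ${deepseek_bill:,.0f} per month")
print(f"ChatGPT o1:  ${openai_bill:,.0f} per month")
```

On these endpoint figures alone, the price per token falls by a factor of 50 while tokens per query rise by a factor of 100, so the cost of a single query roughly doubles. The tenfold increase cited from the Working Paper refers to application-level inference costs over the last two years, which this simple endpoint comparison does not attempt to reproduce. The monthly bills match the $70 and $3,750 in the text.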

The Ticking Time-Bomb of Hyperscale Borrowing

There is no way in which the AI industry can fund its capital expenditures out of revenues from paid subscribers or money from sovereign wealth funds. Hence, AI firms must resort to hyperscale borrowing from banks and investment-grade bond markets to fund their capex, laying the foundations for the next debt crisis. This hyperscale borrowing will create a ticking time bomb on the balance sheets of AI firms, because the core capital expenditure is on specialised GPUs and servers, which, because of unrelenting technological progress, risk becoming economically obsolete within two or three years.

Nvidia is not helping in this respect, because it is building new-generation GPUs each year and GPU prices are likely falling. Nvidia’s latest-generation Blackwell GPUs require entirely new servers, and if you use a lot of them, an entirely new data centre, because the Blackwell GPUs require much more power and cooling. The rate of economic decay of the AI compute infrastructure, therefore, is high and the payback periods are correspondingly short. “You’re investing in something that is a perishable good,” economist David McWilliams told Fortune, calling AI hardware “digital lettuce” that’s “going to go off now.” Data servers, networking equipment and storage devices have a useful life of 3-5 years and a corresponding annual depreciation rate of 20%-30%. These chips are not general-purpose compute engines; they are purpose-built for training and running generative AI models, tuned to the specific architectures and software stacks of a few major providers such as Nvidia, Google, and Amazon. These chips are part of purpose-built AI data centres, engineered for extreme power density, advanced cooling, and specialised networking. Together, they form a closed system optimised for scale but hard to repurpose.
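The link between useful life and depreciation rate can be illustrated with a minimal sketch. It assumes straight-line depreciation with zero salvage value, which is a simplification chosen here and not necessarily the method behind the quoted range; the function names and the $100 purchase cost are hypothetical.

```python
def straight_line_rate(useful_life_years):
    """Annual depreciation as a fraction of purchase cost (straight-line, no salvage value)."""
    return 1.0 / useful_life_years


def book_value(cost, useful_life_years, years_elapsed):
    """Remaining book value after `years_elapsed` years, floored at zero."""
    remaining_share = 1.0 - years_elapsed / useful_life_years
    return max(cost * remaining_share, 0.0)


for life in (3, 4, 5):
    rate = straight_line_rate(life)
    after_two_years = book_value(100.0, life, 2)
    print(f"{life}-year life: {rate:.1%} per year, "
          f"${after_two_years:.2f} of every $100 left after two years")
```

Under this assumption a five-year life corresponds to 20% a year and a three-year life to about 33% a year, close to the 20%-30% range quoted above. With a three-year life, two thirds of the purchase cost is written off after two years, which is why the payback period has to be so short.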

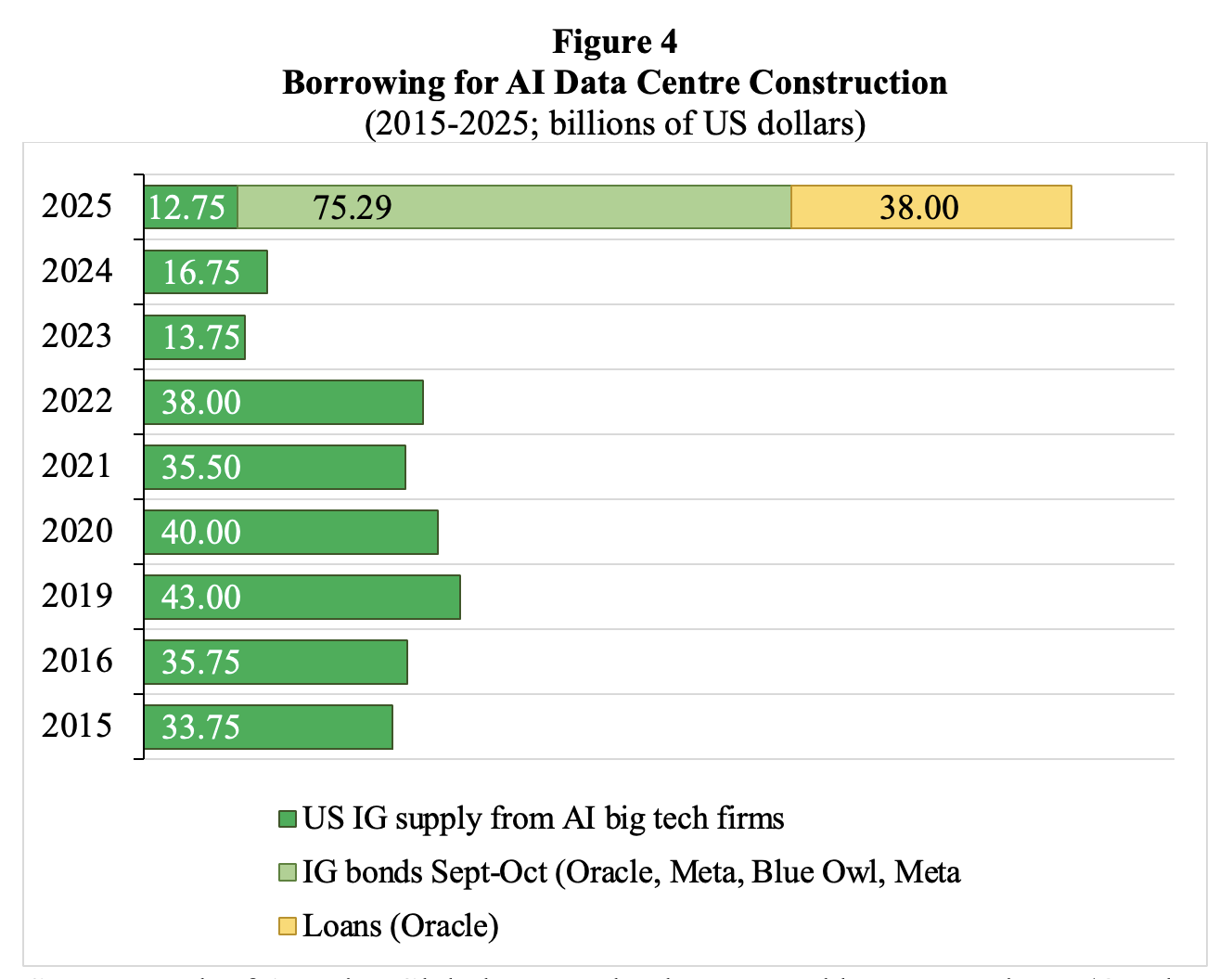

Figure 4 shows borrowing for AI data-centre construction. Investment-grade (IG) borrowing by AI big tech firms during September-October 2025 amounted to $75 billion, compared to $32 billion on average per year during 2015-2024. IG bonds issued by the AI firms made up 14% of the American IG bond market in October 2025. Barclays estimates that cumulative AI-related investment could reach the equivalent of more than 10% of U.S. GDP by 2029, compared to circa 6% in the first six months of 2025.

OpenAI, Anthropic and other startups continue to lose money, and must fund most of the planned investment by selling off pieces of themselves to investors and by resorting to hyperscale borrowing from banks and investment-grade bond markets. Ignoring numerous red flags, particularly operating and financial leverage and the short pay-back periods, almost every Wall Street player is angling to get a slice of the action, often via off-balance-sheet Special Purpose Vehicles (SPVs), from banks such as JPMorgan Chase and Morgan Stanley to asset managers such as BlackRock and Apollo Global Management. This Wall Street frenzy will in all likelihood lay the foundations for the next debt crisis (Fitch 2025).

Source: Bank of America Global Research; chart created by Lucy Raitano (October 31 2025). Notes: IG = investment grade. The data for Blue Owl and Meta refer to a project-style holding company created by Blue Owl Capital to invest in a large-scale Hyperion data centre joint venture with Meta.

Exponential Growth in an Analogue World

It will be impossible to build the projected data centre infrastructure in the next five years or so (which is the horizon of most AI investors). The lead time needed to build a hyperscale data centre is currently around 2 years, but expect it to become much longer, say 7 or more years. Why? Upstream suppliers to the growth in data centres, the established industrial firms, have to expand production. These upstream suppliers will run into labour shortages, long waiting times for power grid connections, material bottlenecks and regulatory blowback, and all this will lengthen the lead times needed to build a hyperscale data centre, as explained in more detail in the Working Paper.

FT Alphaville (2025) further notes that the nature of the AI-related power demand is particularly problematic (Wigglesworth 2025a). It cites a recent Nvidia (2025) report:

“Unlike a traditional data center running thousands of uncorrelated tasks, an AI factory operates as a single, synchronous system. When training a large language model (LLM), thousands of GPUs execute cycles of intense computation, followed by periods of data exchange, in near-perfect unison. This creates a facility-wide power profile characterized by massive and rapid load swings. This volatility challenge has been documented in joint research by NVIDIA, Microsoft, and OpenAI on power stabilization for AI training data centers. The research shows how synchronized GPU workloads can cause grid-scale oscillations. The power draw of a rack can swing from an “idle” state of around 30% to 100% utilization and back again in milliseconds. This forces engineers to oversize components for handling the peak current, not the average, driving up costs and footprint. When aggregated across an entire data hall, these volatile swings, representing hundreds of megawatts ramping up and down in seconds, pose a significant threat to the stability of the utility grid, making grid interconnection a primary bottleneck for AI scaling.”

AI Scaling is Hitting a Wall

The strategic bet of leading AI firms that Generative AI can be achieved by building ever more data centres and using ever more chips is already going bad. This scaling strategy is already exhibiting diminishing returns. It is the wrong strategy, since generic LLMs are not built on proper and robust world models, but instead are built to autocomplete, based on sophisticated pattern-matching (Shojaee et al. 2025). LLMs will continue to make errors and hallucinate, especially when used outside their training data. Generic AI products are never going to really work right and will continue to be untrustworthy.

Three years into the LLM wave, AI expert Gary Marcus explains very clearly why ChatGPT has not lived up to expectations: “The results are disappointing because the underlying tech is unreliable. And that’s been obvious from the start.” Generic LLMs are hard to control; they still can’t reason reliably and never will; they still don’t work reliably with external tools; they continue to hallucinate; they still can’t match domain-specific models; they continue to struggle with alignment between what human beings want and what machines actually do. “The truth is that ChatGPT has never grown up,” Marcus concludes.

Marcus also presents disturbing new research on the performance of frontier LLMs across three benchmarks by CMU professor Niloofar Mireshgalleh. The first benchmark is a math test that could plausibly be answered by large quantities of data. Unsurprisingly, the frontier LLMs perform increasingly well, but, as we saw, at the cost of gigantic volumes of compute. On the second benchmark (which is focused on coding), initial progress in performance is now tailing off, exhibiting diminishing returns. However, as Marcus explains, on the third benchmark, “on a complex task combining theory of mind and privacy that seems harder to game”, the frontier LLMs show slow linear progress, again at the cost of billions of dollars and massive quantities of electricity and water. In sum, progress in performance on complex tasks is just terribly slow, which is the key factor explaining the disappointment of users of LLMs and the limits to profitable adoption by enterprises.

LLMs are great at producing plausible output – while being much less good at getting their facts straight and being incapable of reasoning. The hardwired inclination to hallucinate (Metz and Weise 2025) limits the usefulness of AI in high-stakes activities such as healthcare, education and finance. Potential liabilities resulting from the harm done by the decisions of autonomous, unsupervised AI tools are just too large in these high-stakes activities – and this will restrict the adoption of and reliance on such AI tools. More generally, we have to think of LLMs, as Bender and Hanna (2025) suggest, as “synthetic text-extruding machines”. “Like an industrial plastic process,” they explain, text databases “are forced through complicated machinery to produce a product that looks like communicative language, but without any intent or thinking mind behind it”. The same is true of other “generative” AI models that spit out images and music. They are all, the authors say, “synthetic media machines” – or giant plagiarism machines. “Both language models and text-to-image models will out-and-out plagiarize their inputs,” the authors write. A large fraction of the output will be AI-slop.

In an ironic twist, the supply of AI-slop will only increase in future: owing to the lack of ‘authentic training data’, LLMs will increase their intake of ‘synthetic’, AI-generated data – an incredible act of self-poisoning. The more AI-slop these models ingest, the greater the likelihood that their outputs will be junk: the “garbage-in, garbage-out” (GIGO) principle does hold. AI systems that are trained on their own outputs gradually lose accuracy, diversity, and reliability. This occurs because errors compound across successive model generations, leading to distorted data distributions and irreversible defects in performance. Veteran tech columnist Steven Vaughan-Nichols warns that “we’re going to invest more and more in AI, right up to the point that model collapse hits hard and AI answers are so bad even a brain-dead CEO can’t ignore it.”
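
The loss of diversity described above can be illustrated with a deliberately crude toy, which is not an LLM training run and is not taken from any of the cited studies. It assumes a hypothetical "corpus" of 1,000 distinct items and treats each new model generation as a same-sized random sample, drawn with replacement, from the previous generation's output. The function name `resample_generations` and all parameters are illustrative assumptions. The sketch captures only one part of the argument: items missing from one generation can never reappear in a later one, so variety can only shrink.

```python
import random


def resample_generations(initial_corpus, generations, seed=0):
    """Count distinct items left after each round of self-resampling.

    Assumption (toy model): every generation is a same-sized sample, drawn
    with replacement, from the generation before it. This stands in for a
    model that can only reproduce what it was trained on.
    """
    rng = random.Random(seed)
    corpus = list(initial_corpus)
    distinct_counts = [len(set(corpus))]
    for _ in range(generations):
        # The next "training set" consists only of the previous output.
        corpus = rng.choices(corpus, k=len(corpus))
        distinct_counts.append(len(set(corpus)))
    return distinct_counts


# Hypothetical example: 1,000 distinct items, 20 generations.
counts = resample_generations(range(1000), generations=20)
print("distinct items at start:", counts[0])
print("distinct items at end:  ", counts[-1])
```

In this toy the count of distinct items never rises from one generation to the next, because nothing new ever enters the pool. Real model collapse involves further effects, such as compounding errors in accuracy, that this sketch does not attempt to model.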

Conclusion

For these four reasons, AI’s ‘scaling’ strategy will fail and the AI data-centre investment bubble will pop. The unavoidable AI-data-centre crash in the U.S. will be painful for the economy, even if some useful technology and infrastructure survive and prove productive in the longer run. However, given the unrestricted greed of the platform and other Big Tech corporations, this will also mean that AI tools that weaken labour conditions – in activities including the visual arts, education, health care and the media – will survive. Similarly, generative AI is already entrenched in militaries and intelligence agencies and will, for sure, be used for surveillance and corporate control. All the big promises of the AI industry will fade, but many harmful uses of the technology will stick around.

The immediate economic harm done will look rather insignificant compared to the long-term damage of the AI mania. The continuous oversupply of AI slop, LLM-fabricated hallucinations, clickbait fake news and propaganda, deliberate deepfake images and endless machine-made junk, all produced under capitalism’s banner of progress and greed, consuming loads of energy and spouting tonnes of carbon emissions, will further undermine and self-poison the trust in and the foundations of America’s economic and social order. The massive direct and indirect costs of generic LLMs will outweigh the rather limited benefits, by far.
